Land Of Youth (September 22 2022)

My first full-length solo album in about a decade. All pre-release Bandcamp proceeds were donated to the International Committee of the Red Cross.

Fuse Factory (December 3 2021)

A live (remote) improvised synth and electric guitar performance with DSLR footage of Alexandria, VA, for Fuse Factory in late 2021. The performance will form the basis of a collaborative record with Jay Dickson in 2023.

Improvisation for Appalachian Dulcimer and Audio Effects Processing (May 28 2020)

A Brief History of Pitch Pines (Signals Under Tests Remix; April 19 2020)

"A Brief History of Pitch Pines Signals Under Tests Remix" is a response to audio samples provided by film composer Paul Vinsonhaler. Paul is releasing his own master of this track under the title "A Brief History Of Pitch Pines." This version was mastered by Jake Fader.

Land of Youth (May 1 2020) – FP Creative Remote Collaboration Project

I used Lempner’s recording, the beginning of Stuligross’s percussive recording, and Cloyd’s spoken word recording. I processed Stuligross’s material with elastic audio and time-stretching techniques to create large textures, and Lempner’s material with simple layering and repitching techniques to create droning harmonies. The arrangement is laced with heavy tones and ethereal, broken guitar loops, as usual. The piece begins with a woodwind pedal point, courtesy of Lempner, along with a granular take on Stuligross’s offerings. The aggressive percussive strikes also come from Stuligross. The wash of white noise throughout the piece was achieved by excessively time-stretching Lempner’s open instrumental attack. Cloyd’s spoken word closes out the piece.

Dualism (March 6 2020)

Released in 2020 on Hivemind Records (UK). Jaipongan materials (provided by Riedl) formed the basis of a disparate, beat-driven composition. Vocal and rebab samples were processed using granular synthesis and elastic audio tools.

Disrupt/Construct (2019) Studio Album

Graham collected musical responses to a series of his own guitar improvisations from Neidhardt over the period of a year. Graham then crafted this album at the Elektronmusikstudion EMS in Stockholm, Sweden. Disrupt is a series of buckling themes that function as self-contained, shorter musical compositions. Construct presents a semi-improvised stream-of-consciousness piece that stands in contrast to the disruptive nature of its counterpart and divides into three self-contained musical movements.

· Vinyl edition limited to 100 copies

· Listen: www.denovali.com/n

· LP: www.denovali.com/store

· Download: www.denovali.com/digitalstore

[release date: December 13 2019 | Denovali Records (GER)]

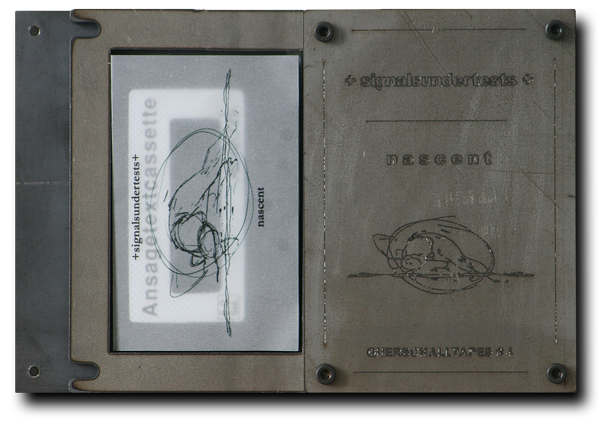

Querschalltapes (2013) Endless cassette: 60 second loop

Limited, hand-numbered edition of 49 pieces.

Engraved metal packaging.

Price: € 35 plus postage: € 4.10 (domestic), € 8.90 (EU), € 15.90 (worldwide)

Aidan Baker – Origins & Evolutions (2012)

A slew of guitar players contributed scored / prepared guitar materials to this record, released on Install (NYC) in 2012.

Nascent (2012) Studio Album

"Nascent" was released in 2012 and features a series of ambient works collected throughout 2010 and 2011. The disc features collaborative efforts from Michael Andrews and Laura Graham. Philip Byrne mastered this disc whilst wearing a tin foil hat (his words, not mine). The disc artwork is by Tennessee-based artist Jon Kenney. Music from "Nascent" has been featured on MTV and in independent films.

Mecca (2010) Studio Album

"Mecca" was released in late 2010 and features artists from the US, Japan, Germany, and Finland. It was featured in Guitar Player Magazine and Guitar International Magazine, and on regional and national TV (Ch. 5) and radio (BBC Radio 1).